Quick and easy VPN setup using the OpenVPN Access Server Docker image on Amazon Linux 2023.

Installation steps

Install Docker on Amazon Linux 2023

dnf update -y

dnf install docker -y

systemctl enable docker

systemctl start docker
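
Before pulling the image, it can be worth confirming that the Docker service actually came up. The following is a minimal sketch rather than part of the original steps; `docker_ready` is a hypothetical helper name, and it assumes `systemctl` and the `docker` CLI are on the PATH.

```shell
# Hypothetical helper: succeeds only if the docker unit is active
# and the daemon answers a basic query.
docker_ready() {
    systemctl is-active --quiet docker && docker info >/dev/null 2>&1
}
```

For example, `docker_ready && echo "Docker is running"` prints the message only when both checks pass.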

OpenVPN Access Server Docker Image

# see: https://openvpn.net/as-docs/docker.html#run-the-docker-container

docker pull openvpn/openvpn-as

docker run -d \
  --name=openvpn-as --cap-add=NET_ADMIN \
  -p 943:943 -p 4443:4443 -p 1194:1194/udp \
  -v /root/openvpn-server:/openvpn \
  openvpn/openvpn-as
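
Once the container has been started, a quick check that it stayed up can save some guessing later. This is a small sketch under the assumption that the container is named openvpn-as as in the command above; `container_running` is a hypothetical helper, not something shipped with Docker or OpenVPN.

```shell
# Hypothetical helper: succeeds when the named container exists
# and Docker reports it as running.
container_running() {
    [ "$(docker inspect -f '{{.State.Running}}' "$1" 2>/dev/null)" = "true" ]
}
```

A possible use is `container_running openvpn-as && docker port openvpn-as`, which lists the published port mappings only if the container is up.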

# Modify ports and hostname as appropriate

cd /root/openvpn-server/etc

vim ./config-local.json

docker restart openvpn-as
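
Since a bad edit to config-local.json could keep the server from coming back after the restart, one option is to take a timestamped copy before opening the file. The sketch below is an optional extra, not part of the original procedure; `backup_file` is a hypothetical helper name.

```shell
# Hypothetical helper: copy a file to "<path>.bak.<timestamp>" next to
# the original and print the path of the copy.
backup_file() {
    backup_dest="$1.bak.$(date +%Y%m%d%H%M%S)"
    cp -p "$1" "$backup_dest" && printf '%s\n' "$backup_dest"
}
```

For example, `backup_file /root/openvpn-server/etc/config-local.json` could be run just before the `vim` step.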

# Get the temporary password

docker logs openvpn-as | grep -i "Auto-generated pass"

# Look for the line: Auto-generated pass = "[password]". Setting in db..
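
To avoid copying the password by hand, the value can be pulled out of the log line with `sed`. This is a sketch that assumes the log line has the form quoted above, with plain double quotes around the password; `extract_as_password` is a hypothetical helper name.

```shell
# Hypothetical helper: read log text on stdin and print the most recent
# auto-generated password, assuming the line format
#   Auto-generated pass = "<password>". Setting in db..
extract_as_password() {
    sed -n 's/.*Auto-generated pass = "\([^"]*\)".*/\1/p' | tail -n 1
}
```

It could be used as `docker logs openvpn-as 2>&1 | extract_as_password`. If the log format differs, the command prints nothing and the `grep` above remains the fallback.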

Configure your OpenVPN services

# Use the generated password to sign in to the Admin Web UI.

# username: openvpn

https://[my_hostname_or_pubip]:943/admin/

# Check the hostname setting and enter your hostname or public IP:

https://[my_hostname_or_pubip]:943/admin/network_settings

# Stop and then start the VPN services to make sure the changes are loaded and persist:

https://[my_hostname_or_pubip]:943/admin/status_overview

# Create a user, then generate a new token URL that the user can use to import the profile
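
If the Admin Web UI pages do not load, a reachability check from the command line can help separate a network or security group problem from a server problem. The sketch below is an assumption-laden example: `admin_url` and `admin_http_status` are hypothetical helpers, port 943 is taken from the URLs above, and `-k` is used on the assumption that the server still presents a self-signed certificate.

```shell
# Hypothetical helper: build the Admin Web UI URL for a host and an
# optional port (defaults to 943, as used in this post).
admin_url() {
    printf 'https://%s:%s/admin/\n' "$1" "${2:-943}"
}

# Hypothetical helper: print only the HTTP status code returned by the
# Admin Web UI. -k skips certificate verification (self-signed assumed).
admin_http_status() {
    curl -k -s -o /dev/null -w '%{http_code}\n' "$(admin_url "$@")"
}
```

For example, `admin_http_status vpn.example.com` (with a placeholder hostname) prints a status code when the port is reachable, while a timeout usually points at a firewall or security group rule.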

Windows client setup

# Install with winget:

winget install -e --id OpenVPNTechnologies.OpenVPNConnect

# Once installed, get the token URL, which will look something like:

openvpn://https://[my_hostname_or_pubip]:943/ConnectClient/[token].ovpn

# Paste it into a browser; it should open the OpenVPN client, import the profile, and connect

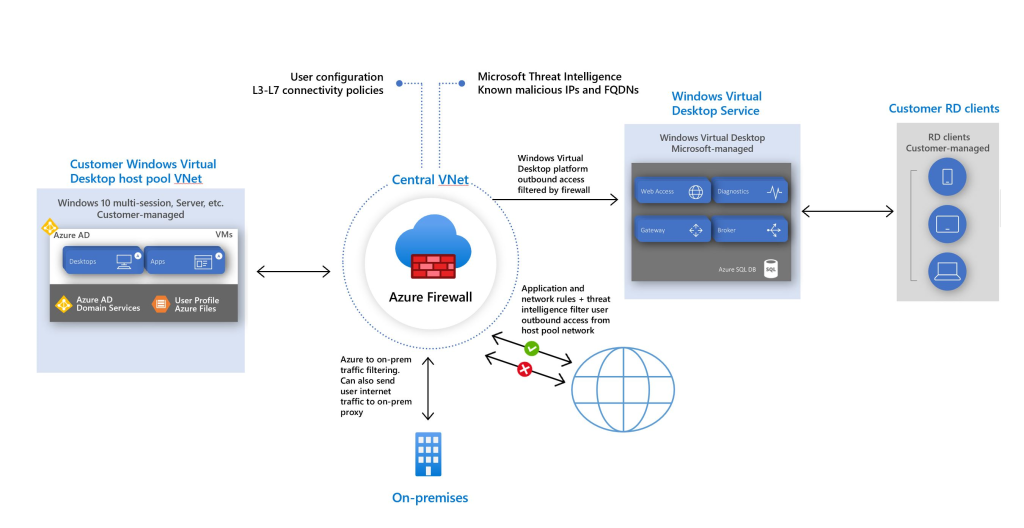

Check out your traffic routing